From January 21st to January 28th 2016, Apple ran a promotion called Get Productive on its App Stores. Yoink, my app to improve and simplify drag and drop on the Mac, participated.

This is my experience with the promo.

Get Productive – Mac App Store Promotion

Get Productive with First-Class Mac Apps

Apple has launched a promotion on both the iOS App Store and the Mac App Store and I’m happy to be able to tell you that Yoink is part of it 🙂

Here’s a partial list of apps included in this Mac App Store Promotion:

- Yoink ($2.99 instead of $6.99)

- 1Password ($24.99 instead of $49.99)

- Things ($24.99 instead of $49.99)

- 2Do ($24.99 instead of $49.99)

… and many more 🙂

9to5Mac has some more information and also lists the deals available to you on the iOS App Store – you can check them out here!

Yoink – 57% off for a limited time as part of the Mac App Store’s Get Productive promotion!

Enjoy 🙂

Yoink Automator Workflow: Add Last Saved File to Yoink

Yoink users have been using Automator Workflows to add all kinds of files to Yoink for a while now – from mail attachments and screenshots to files added from the Terminal.

Douglas (@douglasjsellers on Twitter) today adds to this list of wonderful workflows an Automator Workflow that lets you quickly add files that you recently created or saved.

Here’s what he says about it:

I cooked up my Automator Service that lets you send the last file that you saved (from any application) to Yoink.

When bound to a key combination this allows you to do things like “Export to Web” from Adobe Photoshop, hit the key combo and then the newly created png is on Yoink.

Or say you’re editing a file in Emacs and you want to add it as an email attachment. You save the file, hit the key combo and the file will then be in Yoink for easy dragging into your email.

I also use it heavily to get recently downloaded files from chrome to Yoink.

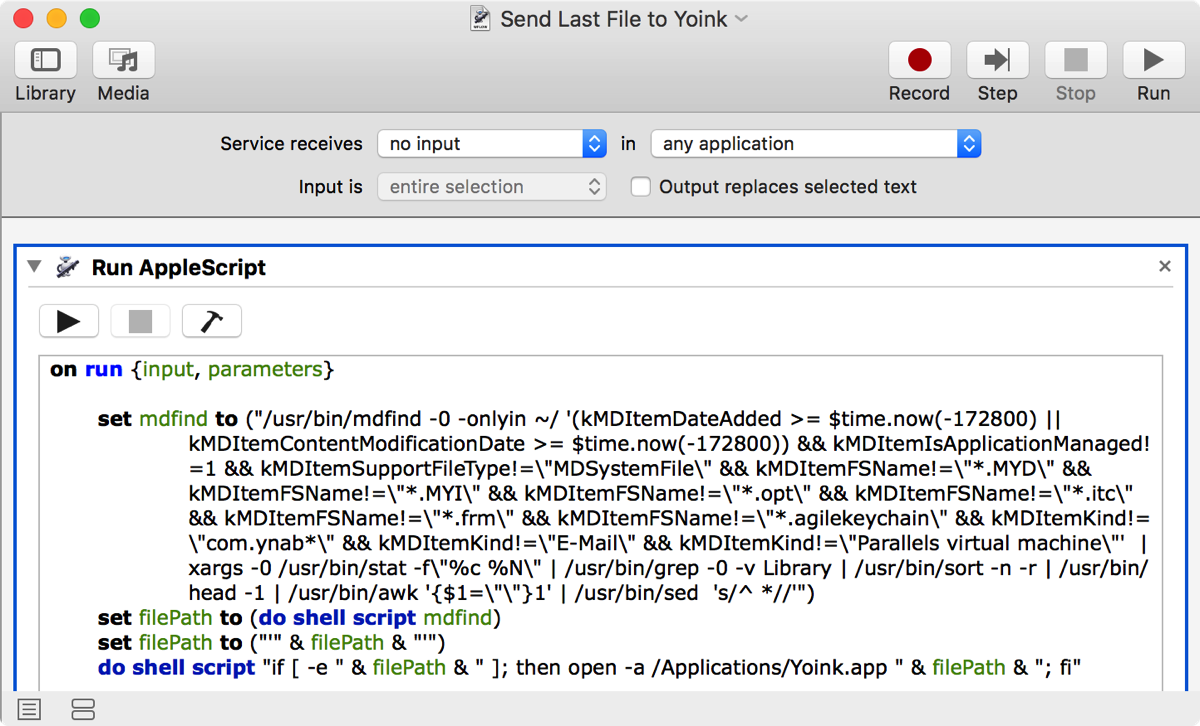

The Automator Workflow

The main part of this workflow is a shell script that finds recently saved files and leaves out those that are less likely to be needed in Yoink. Which files to leave out is something everybody needs to configure for themselves, but since this is an Automator Workflow, that is easily done.
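To give a rough idea of the logic described above, here is a minimal sketch in Python. It is not Douglas's script (his workflow uses a shell script), and the exclusion patterns, the folder names and the function name `last_saved_file` are my own assumptions for illustration. It walks a folder, skips files and folders matching the exclusion lists, and returns the most recently modified file that remains. Handing the result over to Yoink is left out here.

```python
import fnmatch
import os

# Hypothetical exclusion lists; everybody will want to adjust these.
EXCLUDED_PATTERNS = ["*.tmp", "*.log", ".*", "*~"]
EXCLUDED_DIRS = {"Library", "node_modules"}


def is_excluded(name, patterns=EXCLUDED_PATTERNS):
    """Return True if the file name matches any exclusion pattern."""
    return any(fnmatch.fnmatch(name, pattern) for pattern in patterns)


def last_saved_file(root, patterns=EXCLUDED_PATTERNS, excluded_dirs=EXCLUDED_DIRS):
    """Return the path of the most recently modified file below root,
    ignoring excluded files and folders, or None if nothing qualifies."""
    newest_path, newest_mtime = None, -1.0
    for dirpath, dirnames, filenames in os.walk(root):
        # Prune excluded and hidden folders in place so os.walk skips them.
        dirnames[:] = [
            d for d in dirnames
            if d not in excluded_dirs and not d.startswith(".")
        ]
        for name in filenames:
            if is_excluded(name, patterns):
                continue
            path = os.path.join(dirpath, name)
            try:
                mtime = os.stat(path).st_mtime
            except OSError:
                # The file vanished or is unreadable; skip it.
                continue
            if mtime > newest_mtime:
                newest_path, newest_mtime = path, mtime
    return newest_path
```

Calling `last_saved_file(os.path.expanduser("~/Downloads"))`, for example, would return the newest download that is not on the exclusion list. Scanning a whole home folder this way can be slow, which is one reason to keep the list of excluded folders generous.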

Download

The Automator Workflow is available for download here (~130 KB).

My thanks to Douglas for his awesome work.

Installation & Keyboard Shortcut Setup

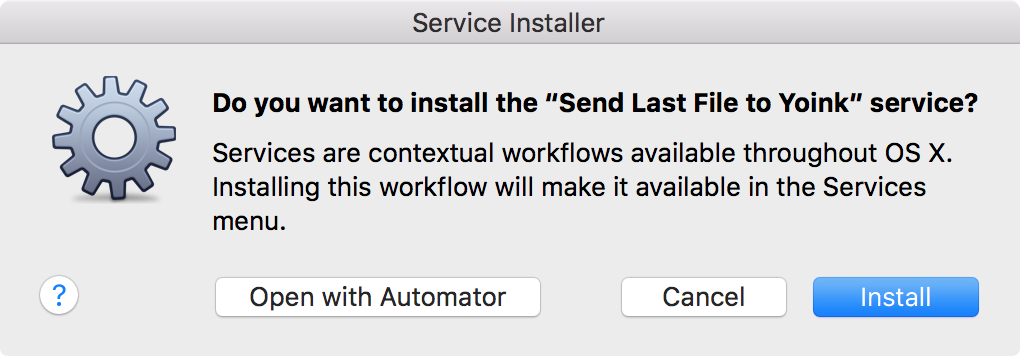

To install this workflow, download it from above, unzip it, double-click it and click on Install when this dialog comes up:

Click on Install if you’d like to install the service, click on Open with Automator if you’d like to make changes

To create a keyboard shortcut for this service:

- Launch System Preferences

- Click on Keyboard -> Shortcuts -> Services

- Find ‘Send Last File to Yoink’ in the list, under ‘General’

- Click on ‘add shortcut’ and enter the shortcut you’d like to use to activate the service

If you have any feedback regarding this workflow or if you’d like to share a workflow of your own, please be sure to get in touch either via Twitter or email. Thank you and enjoy 🙂

Black Friday Sale 2015

I’m participating in TwoDollarTuesday’s Black Friday sale with some of my apps (MacStories has great coverage of over 200 deals as well):

Yoink

Simplify and improve drag and drop

40% off – Mac App Store; Website

ScreenFloat

Create floating screenshots to keep references to anything visible on your screen

28% off – Mac App Store; Website

Transloader

Start downloads on your Mac remotely from your iPhone or iPad

30% off – Mac App Store; Website

Glimpses

Turn your photos and music into stunning still motion videos

40% off – Mac App Store; Website

I hope you’ve had a great Thanksgiving and are enjoying the Black Friday Sale that’s going on 🙂